Stop Being Your AI Agent's Assistant

Everyone on Twitter has a fancy AI coding setup. Multi-agent orchestration, custom frameworks, elaborate prompt chains. It looks impressive. It’s also exhausting to watch.

When we kicked off code.umans.ai at the beginning of February, my cofounder Naji and I saw it as an opportunity to challenge ourselves. We had unlimited LLM access now. No more token anxiety. So we asked: what happens if we really let our agents work autonomously? Not in theory. In practice, on a real product, with real users.

Here’s what we learned.

The Trap We Wanted to Avoid

Our past experiences with AI agents had taught us something: it’s incredibly easy to end up working for the agent instead of the other way around. We’d seen the pattern. You become a bottleneck because the agent waits for you to validate every step. You run things manually to compensate for what it can’t do. You spend too much time reviewing its code. You spend too much time re-reading its code. And everything you didn’t catch comes back to bite you later: bugs, tech debt, unmaintainable code.

So going into this experiment, we had one rule: the AI works for us, not the other way around.

It’s a trap! If your agent asks for screenshots, it’s outsourcing the boring part to you.

— Wassel (@wasselovski) February 18, 2026

Make it set up its own feedback loop (Playwright/tooling) so it can observe, change, verify, repeat.

What Actually Helped

We deliberately didn’t adopt a framework. Not out of laziness. We wanted to learn. We didn’t want to invest weeks mastering some tool that would be obsolete the next time an AI bro tweets or Anthropic ships an update. We wanted to understand, from the ground up, how AI can actually help us with our real problems. And along the way, we got to relearn our own craft. Honestly, it’s been a lot of fun.

We just kept asking ourselves one question: why is the agent asking me to do this, and how do I make sure it never has to again?

Three things made the biggest difference.

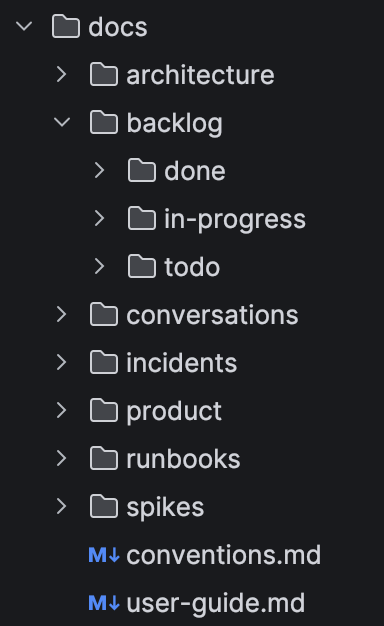

Putting everything in the repo

Not just code. Architecture docs, product vision, backlog, conventions, past conversations, incident reports, runbooks. If a new teammate would need it on day one, the agent needs it too.

We have a docs/ folder at the root with subfolders for architecture, backlog (todo / in-progress / done), conversations, incidents, product specs, runbooks, and spikes. Plus a conventions.md and a user-guide.md. One AGENTS.md file (symlinked to CLAUDE.md) ties it all together.

We didn’t start with all of this. Our first commit had a value proposition, a system design, our first conversation, and an initial backlog. The rest grew from there, one piece at a time, as we realized what the agent kept needing. The more context it had, the less we had to explain, repeat, and correct. That alone was a huge shift.

Just talk to the model

No elaborate prompt engineering. No system. You have a conversation, you iterate, you ask it what it understood, you correct.

The mindset that changed everything for us: sit back. Every time the agent tries to get you to do work for it, it’s a scam. It wants you to run something? Push back. It wants a screenshot of what happened? Push back. It wants you to check if the tests pass? That’s its job. Tell it to figure it out. It usually can.

The three-step loop

Our entire workflow boils down to this:

- Tell it to do the work. Hold firm when it tries to hand micro-tasks back to you.

- Tell it to verify its own work. Tests, types, deploy, logs. Not you.

- Ask: “What was painful and how do we avoid it next time?” The answer goes into the docs, the AGENTS.md, the justfile (a simple command runner, where you can automate useful commands). Next time, that friction is gone.

Tell it to do. Tell it to verify. Tell it to learn. That’s the whole thing.

Then it snowballs

Here’s what surprised us: we started with almost nothing. One agent, a terminal, and a conversation. But because step 3 keeps feeding improvements back into the system, things grow fast.

The foundation: everything can run locally. The system, the individual components in isolation, and the full check chain (lints, type checks, unit tests, integration, e2e, smoke). During development, the agent starts what it needs, checks what it needs, and iterates on its own, with minimal dependency on CI, on cloud infrastructure, or on us.

Early on the agent couldn’t act on anything outside the code, so we gave it CLIs: gh for GitHub, aws cli for infra, just for repetitive tasks. Then it couldn’t test anything visually, so we added Playwright. Then it needed safe feedback on infrastructure and environment changes, so we put all our infra as code in Terraform and gave it the ability to spawn an isolated production-like environment when needed. Then it kept making the same mistakes on infra, so we wrote conventions and runbooks. Each time it needed us for something it should have handled alone, we fixed the gap.

Within a few days, what started as “just talk to the model” had organically grown into a setup with real feedback loops: lint and type checks running automatically, unit tests written and executed by the agent, Playwright for visual verification, autonomous deploys, log access for self-debugging. None of this was planned upfront. It just accumulated, one friction point at a time.

Introspection is the new debug. When your agent can inspect its own state and reasoning, you spend less time debugging and more time steering.

We started with a single coding agent. Alongside it, we had other agents running for different jobs: production monitoring, activity tracking, documentation. But for code, just one. We wanted throughput, not chaos. The ecosystem is full of tools for running parallel coding agents, but spawning five that step on each other or break production every other deploy didn’t appeal to us when real users rely on the product every day.

Now, with more experience, we run two or three coding agents in parallel. But only because we have a better understanding now that allowed us to design independent workstreams aligned with our business. We earned that gradually.

Where We Are Now

Some numbers for context. We’re a team of two cofounders. We do everything: dev, product, user interviews, sales, discovery. We have hundreds of users in production, most of them daily active. We run zero-downtime deployments with real performance and availability constraints.

With this setup, each of us ships about two meaningful increments per day. These are increments that used to take me one to four days each, and I have 17 years of experience. I never stopped coding or designing architecture.

Less than 10% of the code gets reviewed by a human. That sounds scary, but it works because we invested in the process around it: the feedback loops, the context, the tooling. We focus on the delivery system rather than manually inspecting every output.

Some of these principles we’d already been applying on other products we develop. But launching umans code in February gave us a reason to push further, on a fresh repo where everything lives together: frontend, backend, infra as code, and all the documentation the agent needs.

What Was Hard

We’ve painted the picture of what worked. Here’s what didn’t, or at least, what still takes effort.

Instruction following is still unreliable

It’s better than it was six months ago. It keeps improving. But every model we’ve used still has misses. Rules in the AGENTS.md that get ignored this time. A skill file it loaded yesterday but skips today. And it gets worse after compaction: when the session gets long, the context gets summarized, and that’s when instructions start falling off. Things that were crystal clear at the start of the session quietly disappear. You have to stay alert, and you have to design your process assuming the agent will occasionally drop instructions.

The agent doesn’t know what it doesn’t know

Sometimes the agent doesn’t realize it has blind spots. It won’t tell you it’s missing context. It’ll just make something up or take a suboptimal path. So we stay attentive. We watch for gaps and keep adjusting the activable context we put at its disposal: docs, conventions, runbooks, skills. This evolves fast. What worked last week might not be enough this week because the product moved.

Tests matter more than the code

As the product evolves (and it evolves fast) our testing strategy has to evolve with it. The types of tests change. Our role, more often than not, was to guide that evolution through conversation: asking the agent to update the architecture docs, the decisions we take and he documents, the conventions, and the test rules that go with them. We care more about the test suite being right than the implementation being right. If the tests are solid, the implementation can always be fixed.

Deciding what to build

The agent can build anything you ask. That’s exactly the problem. It won’t tell you you’re building the wrong thing. Product decisions (what to prioritize, what to cut, what to validate first) are still entirely on us. That’s not a limitation of the tooling. It’s the job.

Sizing the increments

We are explicit about what makes a good increment. Each one must be shippable (can be deployed independently), valuable (delivers real user or business value), testable (has clear verification criteria), simple (small enough to complete in one session), and validating (has explicit assumptions to confirm).

Simplest thing that might work

The agent’s first implementation is not always the simplest. Sometimes it over-engineers, adds abstractions too early, or picks a more complex approach when a straightforward one exists. This matters more than it sounds. Every bit of unjustified complexity weighs on the next context window. Heavier context means slower reasoning, more mistakes, worse execution. We’ve learned to push back early: is this the simplest thing that could work? If not, simplify before moving on.

What’s Next

This article is about the principles. In a follow-up, we’ll go concrete: how we structure the backlog, how preview apps work, how we build and share agent skills, the day-to-day mechanics.

For now, if you’re drowning in AI tooling announcements and Twitter flex posts, maybe try this: one agent, one repo, one loop. Sit back, give it context, and stop doing its job for it. You’ll be surprised how fast things compound.

We’ll also share repos to practice on and packaged skills soon. Stay tuned.

Related

External perspectives

-

Steve Yegge on AI Agents and the future of software engineering (the practices in this article map to Yegge’s level 5) https://newsletter.pragmaticengineer.com/p/steve-yegge-on-ai-agents-and-the

-

OpenAI, Harness Engineering https://openai.com/index/harness-engineering/